How to Build a Serverless Video Processing Solution on AWS

Pretty much everyone has a camera these days, and that’s probably an understatement. In my office right now, I can count at least eight cameras, though some of them aren’t active at the moment. At least two of them are connected to my workstation.

As more and more people record and share conference calls and build content for YouTube, BitChute, and other video hosting platforms, it’s important to have a reliable, cost-effective, and secure solution for automated video processing.

Recording and editing a video doesn’t necessarily mean that you’re “done.” Depending on your target audience, you may need to repackage video content into different formats and bitrates to ensure the best viewer experience. For example, users on a 3G network might prefer to watch your video content at 480p to avoid constant buffering. On the other hand, someone sitting on their couch at home with a fiber internet connection might want the best 4K (or 8K) viewing experience.

This need led me to create a Serverless Video Processor solution on AWS.

Why Go Serverless for Video Processing?

My objective was to avoid having learners deploy and manage long-lived infrastructure, and instead focus on developing an event-driven data pipeline for video processing. This approach helps keep costs down during periods of low activity, and makes it easier to scale. Because AWS Fargate is a scale-from-zero service, is built on standard container technology, and doesn’t have limits on execution time, I decided this would be the best choice for the compute component of the solution.

Using AWS for Serverless Video Processing

Let’s talk about how I designed this solution from the ground up.

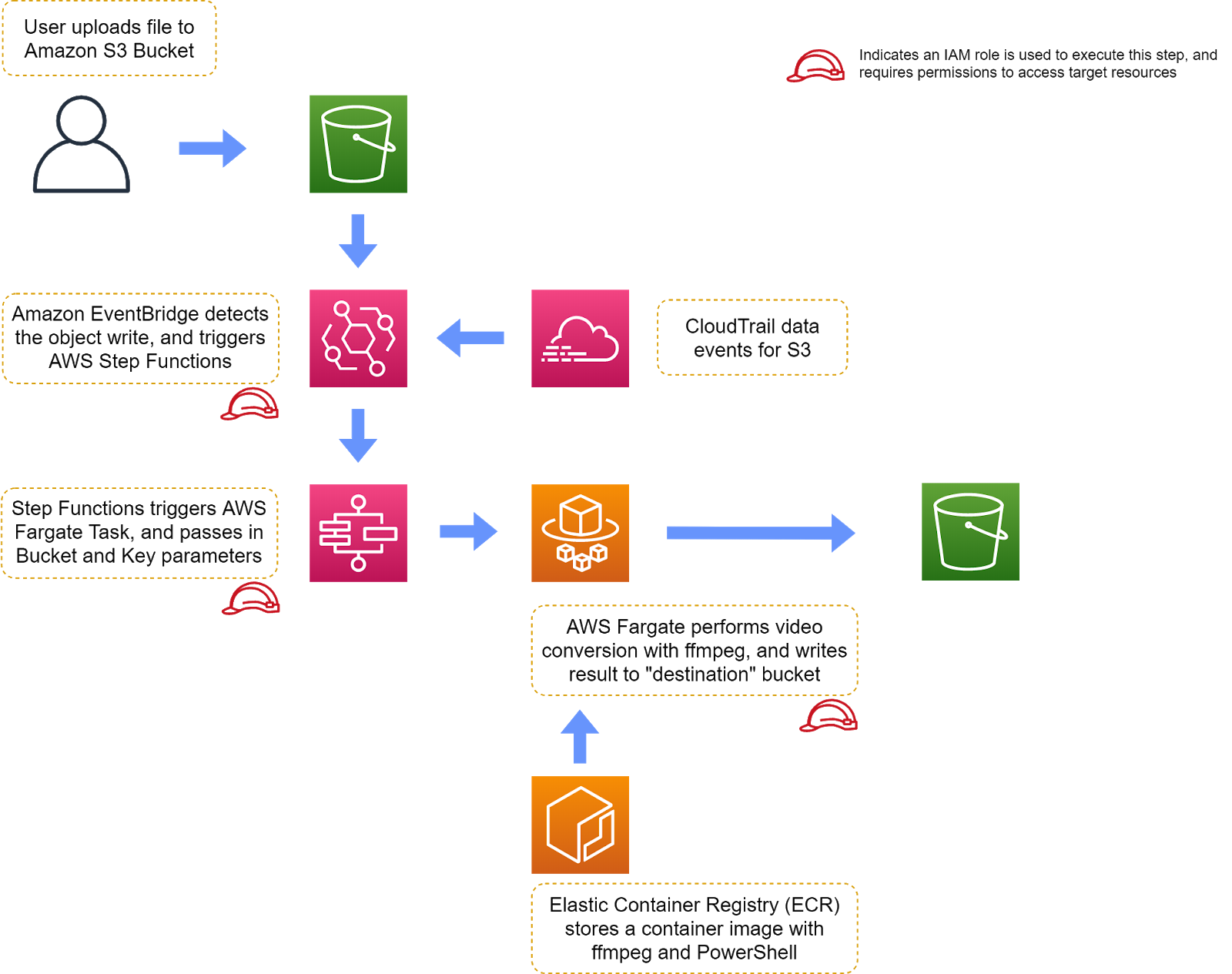

Amazon Simple Storage Service (S3) is the obvious choice for storing video files, so that became my entry point into the project. Every time a new file is uploaded into an S3 bucket, an event should fire that triggers a video processing task. S3 can send event notifications to a few targets, such as SNS topics and AWS Lambda functions. However, I wanted to write as little code as possible, so that left Lambda out of the picture.

Let’s explore the alternative route I selected.

If you have AWS CloudTrail audit logging enabled for your AWS account, you can configure “data events,” which are distinct from resource management events. When an S3 object is read or written, AWS CloudTrail can be configured to log these high-frequency data events in addition to less frequent resource management events, such as creating or deleting an S3 bucket. Once these data events have been configured in AWS CloudTrail, the Amazon EventBridge service can be configured to capture and act on them.
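
As a rough illustration of the EventBridge side, here is a minimal Python sketch that builds an event pattern matching S3 uploads recorded by CloudTrail. The function name and the bucket name are hypothetical, and the choice of `PutObject` and `CompleteMultipartUpload` as the events to match is an assumption about which upload calls you care about, not something taken from the project itself.

```python
import json


def s3_upload_event_pattern(bucket_name: str) -> dict:
    """Build an EventBridge event pattern for S3 uploads logged by CloudTrail.

    bucket_name is a placeholder for your own "source" bucket.
    """
    return {
        "source": ["aws.s3"],
        "detail-type": ["AWS API Call via CloudTrail"],
        "detail": {
            "eventSource": ["s3.amazonaws.com"],
            # Assumption: single-part and multipart uploads should both match.
            "eventName": ["PutObject", "CompleteMultipartUpload"],
            "requestParameters": {"bucketName": [bucket_name]},
        },
    }


if __name__ == "__main__":
    # Print the pattern as JSON, ready to paste into an EventBridge rule.
    print(json.dumps(s3_upload_event_pattern("my-source-videos"), indent=2))
```

The pattern only matches if CloudTrail data events are enabled for the bucket in question; without them, no matching events reach EventBridge.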

Once you’ve captured the event in Amazon EventBridge, there is a wider array of AWS services that you can trigger. EventBridge rules give you the flexibility of triggering other services such as Step Functions, Elastic Container Service (ECS), Simple Notification Service (SNS) topics, Simple Queue Service (SQS) queues, EC2 Run Command, and many others.

At this point, you might think I would trigger an AWS Fargate task directly from an EventBridge rule. However, at the moment, EventBridge doesn’t support passing environment variables (via container overrides) directly into a Fargate task.

Hence, I opted to use Step Functions as an intermediary instead. AWS Step Functions is a powerful, managed orchestration service that enables you to stub out workflows and then implement the application logic later on. In this solution, I have a very basic Step Functions state machine with only one step in it; that step invokes an AWS Fargate task. The name of the S3 bucket and the key of the uploaded S3 object are then passed into the AWS Fargate task as container environment variables.
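
To make the single-step workflow more concrete, here is a hedged Python sketch that generates an Amazon States Language definition along these lines. It is not the project's actual definition: the state name, the `S3_BUCKET` and `S3_KEY` variable names, the function parameters, and the public IP setting are all assumptions, as is the use of the `.sync` integration (which waits for the task to finish). The JSON paths assume the EventBridge rule passes the full CloudTrail event as the state machine input.

```python
import json


def build_state_machine_definition(cluster_arn, task_definition_arn,
                                   container_name, subnet_ids):
    """Return a one-state definition that runs a Fargate task via ECS RunTask."""
    return {
        "StartAt": "TranscodeVideo",
        "States": {
            "TranscodeVideo": {
                "Type": "Task",
                # Assumption: wait for the ECS task to complete (.sync).
                "Resource": "arn:aws:states:::ecs:runTask.sync",
                "Parameters": {
                    "LaunchType": "FARGATE",
                    "Cluster": cluster_arn,
                    "TaskDefinition": task_definition_arn,
                    "NetworkConfiguration": {
                        "AwsvpcConfiguration": {
                            "Subnets": subnet_ids,
                            "AssignPublicIp": "ENABLED",
                        }
                    },
                    "Overrides": {
                        "ContainerOverrides": [
                            {
                                "Name": container_name,
                                # Keys ending in ".$" are resolved from the
                                # state input (the CloudTrail event).
                                "Environment": [
                                    {"Name": "S3_BUCKET",
                                     "Value.$": "$.detail.requestParameters.bucketName"},
                                    {"Name": "S3_KEY",
                                     "Value.$": "$.detail.requestParameters.key"},
                                ],
                            }
                        ]
                    },
                },
                "End": True,
            }
        },
    }


if __name__ == "__main__":
    definition = build_state_machine_definition(
        "arn:aws:ecs:us-east-1:111122223333:cluster/video",        # placeholder
        "arn:aws:ecs:us-east-1:111122223333:task-definition/transcoder",  # placeholder
        "transcoder",
        ["subnet-00000000"],
    )
    print(json.dumps(definition, indent=2))
```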

The AWS Fargate task is where the bulk of the video processing / transcoding work is done. Because Fargate deploys tasks from industry-standard container images, it's easy to package up your application, and all of its dependencies, into an OCI-compliant image. The container image can easily be built and tested locally, using Docker Desktop, and once it's ready for production, the image can be pushed into Elastic Container Registry (ECR).

The container image we deploy to Fargate includes the PowerShell automation language, along with ffmpeg, a ubiquitous tool for performing video conversions of all types.
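
The project drives ffmpeg from PowerShell; the Python sketch below only illustrates what a typical ffmpeg invocation for a downscaled rendition might look like. The function name, the default height and bitrate, and the codec choices (`libx264` and `aac`) are assumptions for illustration, not the settings the project uses.

```python
import subprocess


def build_ffmpeg_command(input_path, output_path, height=480, video_bitrate="1000k"):
    """Build an ffmpeg argument list that rescales a video to the given height."""
    return [
        "ffmpeg",
        "-y",                          # overwrite the output file if it exists
        "-i", input_path,
        "-vf", f"scale=-2:{height}",   # keep aspect ratio, force an even width
        "-c:v", "libx264",
        "-b:v", video_bitrate,
        "-c:a", "aac",
        output_path,
    ]


if __name__ == "__main__":
    # Requires ffmpeg on the PATH and an input.mp4 in the working directory.
    subprocess.run(build_ffmpeg_command("input.mp4", "output-480p.mp4"), check=True)
```

Passing the arguments as a list rather than a single shell string avoids quoting problems with object keys that contain spaces.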

The Outcome: Serverless Video Transcoding

Once the final solution has been deployed, you can upload one or more .mp4 files to the "source" S3 bucket. Each file upload will automatically trigger a series of events, which ultimately causes AWS Fargate to spin up a task (container) that performs a video transcoding job. After performing the video conversion, the PowerShell script running in Fargate will upload the final result to a separate "destination" S3 bucket.
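
The flow inside the task can be sketched as a small planning step: read the source location from the environment, then decide where the result should go. The real worker is a PowerShell script; this Python version is only an illustration, and the variable names `S3_BUCKET`, `S3_KEY`, and `DESTINATION_BUCKET`, along with the `-480p` naming scheme, are hypothetical.

```python
import os
from pathlib import PurePosixPath


def plan_transcode_job(env):
    """Work out source and destination locations from environment variables."""
    source_key = PurePosixPath(env["S3_KEY"])
    # Assumption: keep the same prefix and append a rendition suffix.
    destination_key = source_key.with_name(f"{source_key.stem}-480p.mp4")
    return {
        "source_bucket": env["S3_BUCKET"],
        "source_key": str(source_key),
        "destination_bucket": env["DESTINATION_BUCKET"],
        "destination_key": str(destination_key),
    }


if __name__ == "__main__":
    # In the container, these values would come from the task's overrides.
    print(plan_transcode_job(os.environ))
```

The download, transcode, and upload steps would then operate on the locations returned by this function.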

You can then consume the transcoded files from the destination bucket in any way you see fit, for example publishing them on a website, or having another script publish those files to a video hosting service.

AWS Fargate Example: Serverless Video Processing

If you'd like to see how this solution works, from start to finish, then check out my training on this project by logging into CBT Nuggets, searching for “serverless video processor,” and then clicking on the Skills tab. You can also explore the open source project on GitHub.

Thanks for reading!
